F1Tenth

One tenth the scale. Ten times the fun.

Overview

The goal of F1Tenth is to build a 1/10th-scale F1 car capable of racing autonomously around a track while avoiding static obstacles. To do this, we are building the entire racing stack, both software and hardware. The hardware includes mechanical work (modifying the chassis of a prebought RC car) and electrical work (power distribution, battery management systems, and sensor integration and communication with the main compute node). A lot of software work is also needed to interpret and respond to the environment, meaning both the obstacles and the track itself.

How to Join

Just message us! No experience is necessary to join, and students of all backgrounds are welcome. This includes (but is not limited to) computer engineering, mechanical engineering, computer science, and electrical engineering. See our contact page for how to reach us.

Project Details

Our project is divided into three sections: hardware, object detection, and software. The overarching goal is to map out a floor plan and then use that plan to drive around without human input.

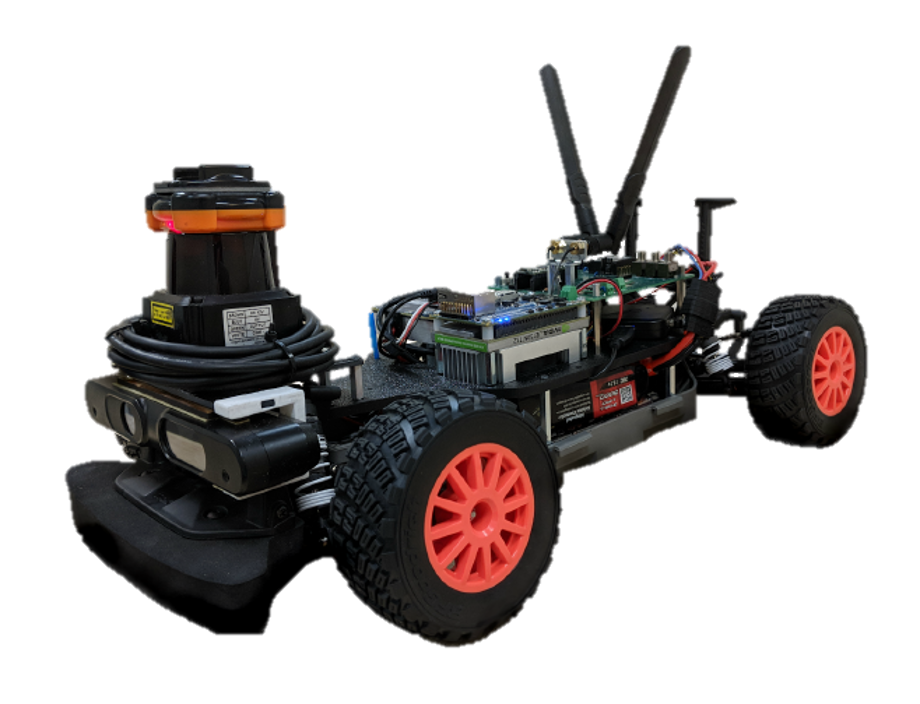

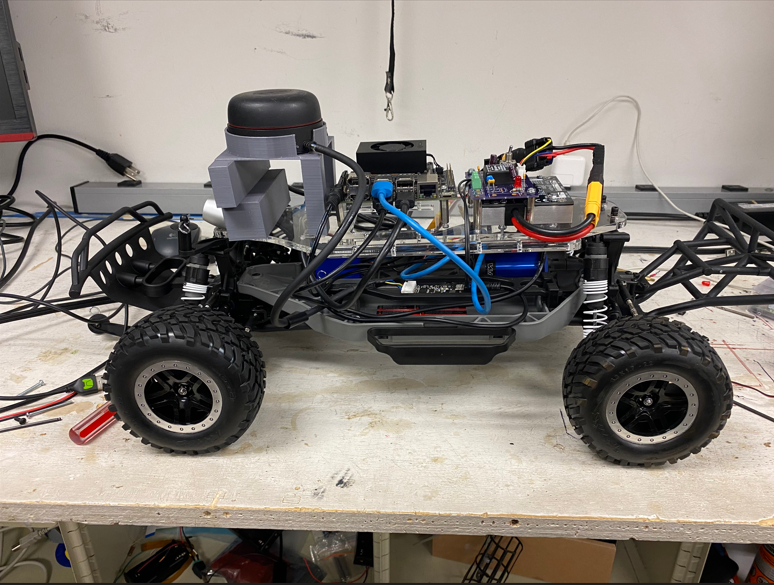

Hardware

The hardware section covers setting up the chassis and wiring for a prebuilt car and 3D printing the mounts and components that hold the car together. Much of the work consists of setting up the tools the car needs to navigate properly. This includes setting up the camera (a combination of an Intel D400 and T235), setting up the battery and power for the car, and making the chassis changes needed for good navigation. A lot of this work is 3D printing and wiring, so if you have experience in these skills or are looking to learn them, this is a great place to start.

Software & ROS

The role of the software team is to develop, test, and integrate a working code base on the Robot Operating System (ROS) platform, which handles communication between all the functional nodes running on the car. The main modules in our software are vision and lidar perception, motion and path planning, localization, and controls. We also work with our hardware team to integrate the system on a resource-constrained embedded platform while keeping the framework highly customizable.

Object Detection

The object detection section consists of designing algorithms that take a point cloud from RTAB-Map and convert it into information the car can use to decide where to go. This section is the most open-ended of the three, since many different methods could be used to complete this task. Most of the work in this section consists of machine learning or algorithm design.
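
As one illustration of what "useful information" could look like, here is a minimal sketch that bins a set of 3D points into a 2D occupancy grid and reports the distance to the nearest occupied row. It is not our actual pipeline and does not use the RTAB-Map API. The function names, the car-frame convention (x forward, y left, z up, in metres), and all thresholds are assumptions made for this example.

```python
from typing import Iterable, List, Optional, Tuple

# Assumed convention: (x forward, y left, z up) in metres, relative to the car.
Point = Tuple[float, float, float]


def occupancy_grid(
    points: Iterable[Point],
    cell_size: float = 0.25,
    x_range: Tuple[float, float] = (0.0, 2.0),
    y_range: Tuple[float, float] = (-1.0, 1.0),
    z_min: float = 0.05,
    z_max: float = 0.5,
    min_hits: int = 1,
) -> List[List[bool]]:
    """Bin points into a top-down grid; a cell is occupied if it has enough hits."""
    rows = int(round((x_range[1] - x_range[0]) / cell_size))
    cols = int(round((y_range[1] - y_range[0]) / cell_size))
    counts = [[0] * cols for _ in range(rows)]
    for x, y, z in points:
        # Drop floor returns and anything taller than the assumed obstacle band.
        if not (z_min <= z <= z_max):
            continue
        # Drop points outside the region of interest in front of the car.
        if not (x_range[0] <= x < x_range[1] and y_range[0] <= y < y_range[1]):
            continue
        # Clamp indices to guard against floating-point rounding at the far edge.
        r = min(int((x - x_range[0]) / cell_size), rows - 1)
        c = min(int((y - y_range[0]) / cell_size), cols - 1)
        counts[r][c] += 1
    return [[n >= min_hits for n in row] for row in counts]


def nearest_obstacle_distance(
    grid: List[List[bool]], cell_size: float = 0.25, x_min: float = 0.0
) -> Optional[float]:
    """Return the forward distance to the near edge of the first occupied row."""
    for r, row in enumerate(grid):
        if any(row):
            return x_min + r * cell_size
    return None


if __name__ == "__main__":
    sample = [(1.0, 0.0, 0.2), (1.1, 0.1, 0.2), (0.5, 0.6, 0.0), (3.0, 0.0, 0.2)]
    grid = occupancy_grid(sample)
    print(nearest_obstacle_distance(grid))
```

A grid like this is only one possible representation. Clustering the points or feeding them to a learned detector are equally valid directions for this section.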